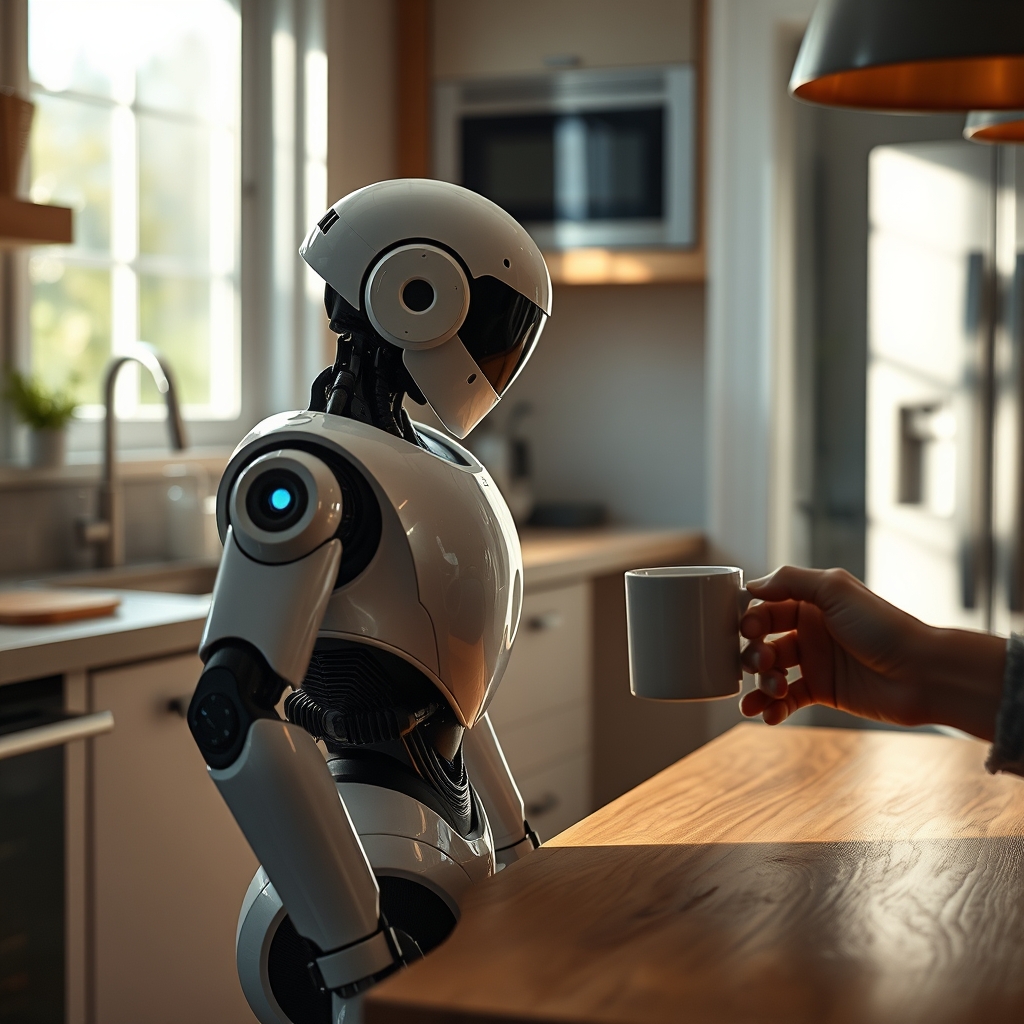

At the NVIDIA GTC 2026 conference, RealSense demonstrated a perception and reasoning platform that lets humanoid robots move through cluttered home spaces without collisions. The system fuses depth cameras, tactile feedback and on‑board AI to map a living room in real time, distinguishing a plush rug from a glass coffee table. In the demo, a sleek robot paused at a kitchen doorway, its servos humming softly as it scanned the warm wood grain of the countertop. A homeowner, coffee mug in hand, lingered for a heartbeat, watching the machine adjust its stride to avoid the stray cat curled on the floor.

How RealSense's navigation system works in a home setting

The software builds a layered model of the environment: a coarse geometric scaffold for speed, overlaid with fine‑grained semantic tags for safety. This duality creates a tension between efficiency and protection: however fast the robot moves, it must still be able to stop before a child's toy or a delicate vase. By embedding this reasoning directly in the robot's controller, RealSense presents the humanoid as a practical domestic assistant rather than speculative gadgetry. The broader trend is clear: AI‑driven perception is becoming the connective tissue of the smart home, turning isolated appliances into a coordinated, responsive ecosystem.
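To make the layered idea concrete, here is a minimal Python sketch of how a coarse geometric layer and a semantic layer might combine to cap a robot's speed. It is not RealSense's implementation. Every name in it (`LayeredMap`, `CLEARANCE_M`, `max_safe_speed`), the clearance values, and the deceleration and speed limits are assumptions made up for illustration. The stopping rule is the standard constant-deceleration relation v = sqrt(2·a·d).

```python
import math
from dataclasses import dataclass, field

# Hypothetical clearance margins in metres per semantic tag (illustrative values only).
CLEARANCE_M = {"toy": 0.30, "glass_table": 0.40, "vase": 0.50}
DEFAULT_CLEARANCE_M = 0.20   # occupied cells that carry no semantic tag
TRAVERSABLE = {"rug"}        # tags the robot may walk over (assumption)


@dataclass
class LayeredMap:
    cell_size_m: float = 0.25
    occupied: set = field(default_factory=set)  # coarse geometric layer: (col, row) cells
    tags: dict = field(default_factory=dict)    # semantic layer: (col, row) -> tag

    def add_obstacle(self, x_m, y_m, tag=None):
        """Mark the grid cell containing (x_m, y_m) as occupied, with an optional tag."""
        cell = (math.floor(x_m / self.cell_size_m), math.floor(y_m / self.cell_size_m))
        self.occupied.add(cell)
        if tag is not None:
            self.tags[cell] = tag

    def free_distance(self, x_m, y_m):
        """Distance to the nearest non-traversable cell centre, minus its clearance margin."""
        best = math.inf
        for cell in self.occupied:
            tag = self.tags.get(cell)
            if tag in TRAVERSABLE:
                continue  # e.g. a rug is mapped but does not block motion
            centre_x = (cell[0] + 0.5) * self.cell_size_m
            centre_y = (cell[1] + 0.5) * self.cell_size_m
            margin = CLEARANCE_M.get(tag, DEFAULT_CLEARANCE_M)
            gap = math.hypot(centre_x - x_m, centre_y - y_m) - margin
            best = min(best, max(0.0, gap))
        return best


def max_safe_speed(free_distance_m, max_decel=1.0, speed_cap=1.2):
    """Highest speed (m/s) from which the robot can brake to rest within free_distance_m."""
    return min(speed_cap, math.sqrt(2.0 * max_decel * free_distance_m))


if __name__ == "__main__":
    home = LayeredMap()
    home.add_obstacle(0.3, 0.1, tag="rug")   # traversable, ignored by the speed limit
    home.add_obstacle(1.0, 0.0, tag="toy")   # fragile, gets a clearance margin
    print(max_safe_speed(home.free_distance(0.125, 0.125)))
```

In this sketch the geometric layer alone answers "is something there?" cheaply, while the semantic layer decides how much room that something deserves. This is one simple way to express the efficiency-versus-protection trade-off described above.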

The platform matters because it turns the promise of a robot assistant into a practical safety feature for daily living.